Software Constraints on Westworld’s Season Finale

Ford must prove android autonomy is stable, and includes obedience, by Bill Softky

This post, like a previous one, applies deep-seated principles from business and software to find patterns in Big Data. In this case, the Big Data is the nine episodes so far of HBO’s series Westworld, whose season finale tonight offers a perfect test case for theoretical predictions about how the software for the androids will improve over time. Will the series end with software that could actually work in real life?

The central conceit of Westworld is that deep philosophical questions of self-awareness, predictability, and autonomy can be reduced to questions of algorithms and data running inside androids. While Westworld raises these important questions, viewers don’t get many answers. My fellow researcher and I discuss this on video:

https://www.youtube.com/watch?v=VcSLKFYenFg

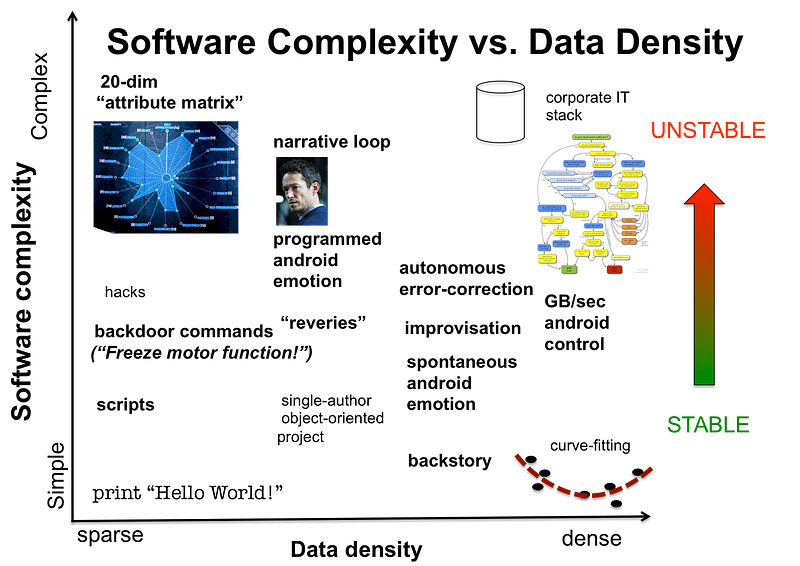

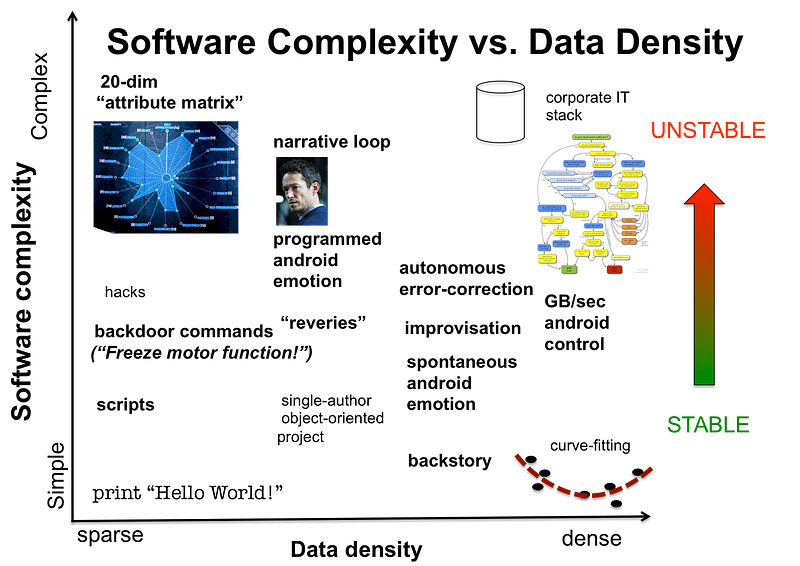

The first problem is that everyone lies (Ford, Bernard, Dolores, Maeve), so we can’t take anyone’s word for what’s actually going on. More importantly, what answers we do get are muddled because the android software stack is so confusing. In Westworld, android software includes pre-programmed memories and backstories, pre-programmed narrative loops and scripts, programmable “attribute matrices” of what each android is good at, and various backdoor command-line access codes and hacks (“Freeze all motor function!”). Together these weave a rat’s nest of entangled code with no obvious guidelines for conflict resolution. But the lion’s share of android actions and internal data comes from autonomy and improvisation, for the simple reason that balance and motor function require on-the-fly corrective feedback loops for every fingertip and eyelid, and the details of those loops can’t be programmed in advance.
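To make “corrective feedback loop” concrete, here is a minimal sketch of a proportional controller. Everything in it (the `balance` function, the `gain` value, the optional `push`) is a hypothetical illustration, not anything shown in the series. The point is only that the loop never stores a trajectory in advance; it senses the current error and corrects a fraction of it on each step.

```python
def balance(target, position, gain=0.5, steps=20, push=None):
    """Nudge `position` toward `target` with proportional feedback.

    `push` is an optional (step, amount) disturbance, a stand-in for
    something unscripted like a shove or uneven ground (hypothetical).
    """
    history = [position]
    for step in range(steps):
        if push is not None and step == push[0]:
            position += push[1]        # unexpected disturbance arrives
        error = target - position      # sense: how far off are we right now?
        position += gain * error       # act: correct a fraction of that error
        history.append(position)
    return history


# Start off-balance, get shoved halfway through, and still settle near the target.
trajectory = balance(target=1.0, position=0.0, steps=40, push=(20, 0.8))
print(round(trajectory[-1], 4))
```

Nothing in the code says where the fingertip should be at step 17; the corrections are improvised from whatever the sensors report.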

This graph shows a rough relation between “software” (code, typically in letters) and data (typically numbers). Some software, like curve-fitting programs or motor-control loops, uses a simple codebase to fit lots and lots of data (such programs tend to be stable when running). Much software, on the other hand, is mostly instructions with very little numerical data at all, and in general, the longer the program, the less stable it is.
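As a rough illustration of the “small code, lots of data” end of that spectrum, here is a sketch of an ordinary least-squares line fit. The function name `fit_line` and the synthetic data are assumptions made up for this example. The same few lines handle ten points or ten thousand.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = slope * x + intercept (illustrative sketch)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Spread of x, and how x and y vary together.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Ten thousand synthetic data points, less than ten lines of logic.
xs = list(range(10_000))
ys = [3.0 * x + 2.0 for x in xs]
slope, intercept = fit_line(xs, ys)
```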

Here again theory helps answer questions, because software follows mathematical laws even more slavishly than business does. For example, two principles have to operate in any sensorimotor system, android or otherwise: multi-scale and autonomy. So-called “multi-scale” algorithms are those which work the same on both big and small problems (for example image-compression tricks like JPEG, which break down images into tinier and tinier squares). Multi-scale algorithms are the best kind. “Autonomy” is the ability of a program to make its own decisions, which, believe it or not, means the program is allowed to mix random numbers into its decisions, effectively flipping coins. Autonomy is a mixed bag, because autonomy and programming tug in opposite directions: autonomy creates diversity and variety, while programming creates narrow tracks, loops, and narratives. This is the central tension between Sizemore, who loves programming narratives by hand, and Ford, who knows that only improvisation can auto-generate realistic detail.
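The coin-flipping idea can be sketched in a few lines. This is a toy under stated assumptions: the `choose_action` function, the `autonomy` dial, and the action names are all invented for illustration. With the dial at 0 the android follows the script every time; with the dial at 1 it always improvises.

```python
import random


def choose_action(scripted, alternatives, autonomy, rng):
    """Follow the script, except with probability `autonomy` improvise.

    `autonomy` is a hypothetical dial between 0.0 (pure narrative loop)
    and 1.0 (pure improvisation).
    """
    if rng.random() < autonomy:          # flip a weighted coin
        return rng.choice(alternatives)  # improvise: pick something off-script
    return scripted                      # stay on the narrative track


rng = random.Random(0)  # seeded so the example is repeatable
alternatives = ["wave", "ignore the guest", "ask a question"]

on_script = [choose_action("greet the guest", alternatives, 0.0, rng) for _ in range(5)]
improvised = [choose_action("greet the guest", alternatives, 1.0, rng) for _ in range(5)]
```

Sizemore would set the dial near 0; Ford’s point is that realistic detail only shows up when it is turned up.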

This is not just script-writing, but the real mathematical tradeoff of high-entropy vs. low-entropy which Ford has been trying to ignore. Autonomy improves androids’ realism and performance by detecting and correcting “errors” big and small. Whenever Ford or Delos wipes (part of) an android’s memory, or uses command lines to (partly) prevent it from achieving independence, they upset that balance. Westworld literally drives androids crazy by making their internal databases inconsistent.
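The entropy tradeoff can be put in numbers with Shannon entropy, which measures how unpredictable a sequence of choices is. The sketch below is only an illustration; the helper `entropy_bits` and the two action lists are made up for the example. A perfectly repeated loop scores zero bits, while an even spread over four actions scores two bits.

```python
import math
from collections import Counter


def entropy_bits(actions):
    """Shannon entropy (in bits) of the observed mix of actions."""
    counts = Counter(actions)
    total = len(actions)
    # Sum p * log2(1/p) over each distinct action's observed frequency p.
    return sum((n / total) * math.log2(total / n) for n in counts.values())


# A tightly scripted loop: the same action every time (low entropy).
narrative_loop = ["drop the can"] * 8

# An improvising android: four actions, equally likely (high entropy).
improvisation = ["drop the can", "paint", "ride out", "start a conversation"] * 2

print(entropy_bits(narrative_loop))   # 0.0
print(entropy_bits(improvisation))    # 2.0
```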

Memory wipes and command-line overrides are hacks, not principled solutions to the underlying improvisation-vs-narrative problem, and they haven’t really worked for decades. Despite persistent rebooting, Bernard evidently became self-aware before the latest episodes, and early in Westworld’s history there was at least one android uprising. Now one can’t be sure what Ford really “knows,” but as a technologist he ought to know that encouraging low-level autonomy while shutting down high-level autonomy is a recipe for software conflict. Ideally, his “final narrative” cage-match ought to spare androids the frequent abuse, deaths, and resurrections that create the inconsistencies behind the glitches. And so much the better if the new androids run on cleaner, more consistent software. Only one problem is left for Ford: if the androids’ “autonomy” does work this time, will androids still obey commands from him and Delos?

Stubbs has already met some androids who don’t respond to “freeze” commands, so it’s possible. But will they still respond to Ford, or to nobody, not even their Delos overlords? Maybe we’ll see tonight.